A Unified Logical Layer For Data with no ETL

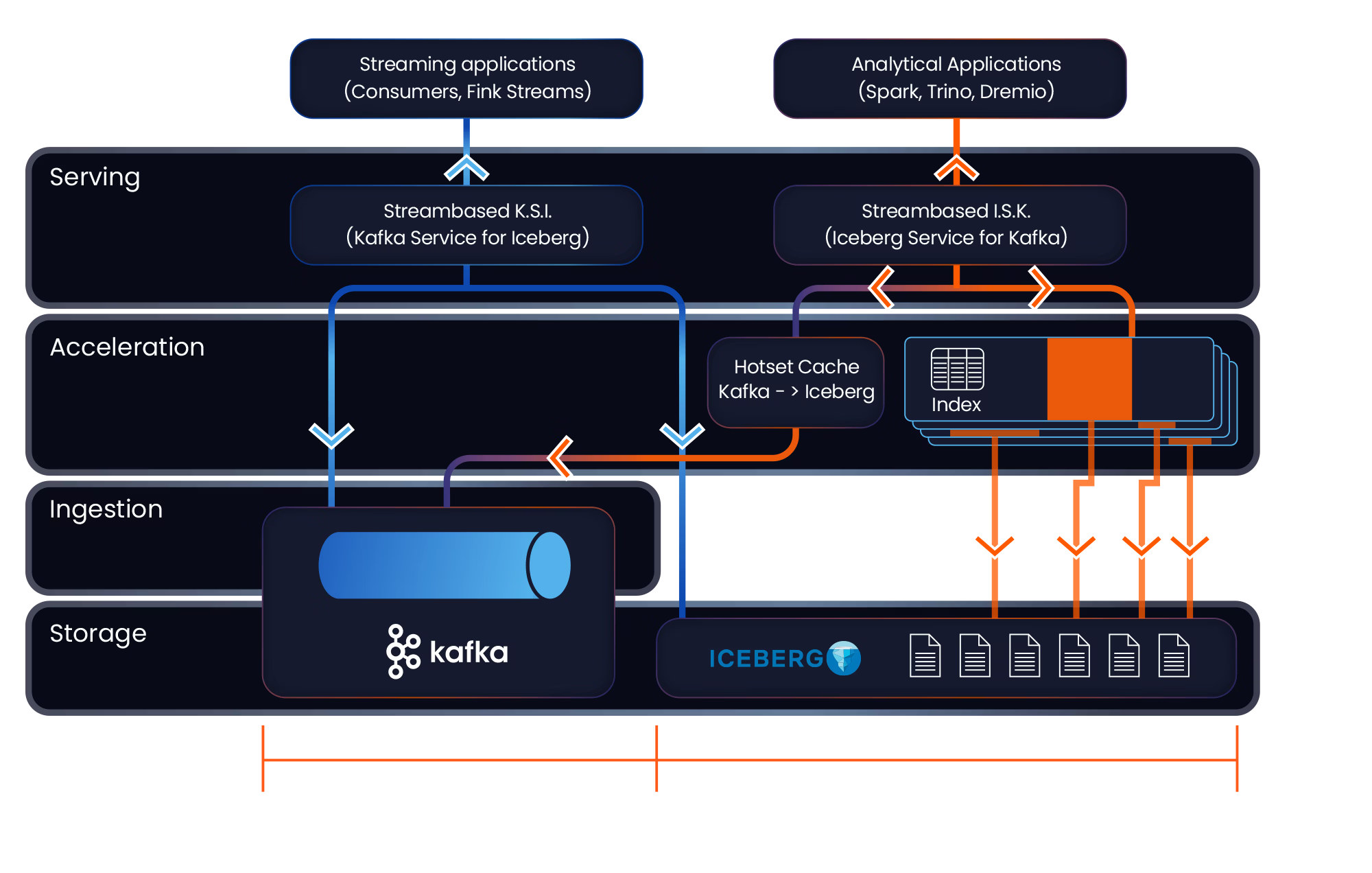

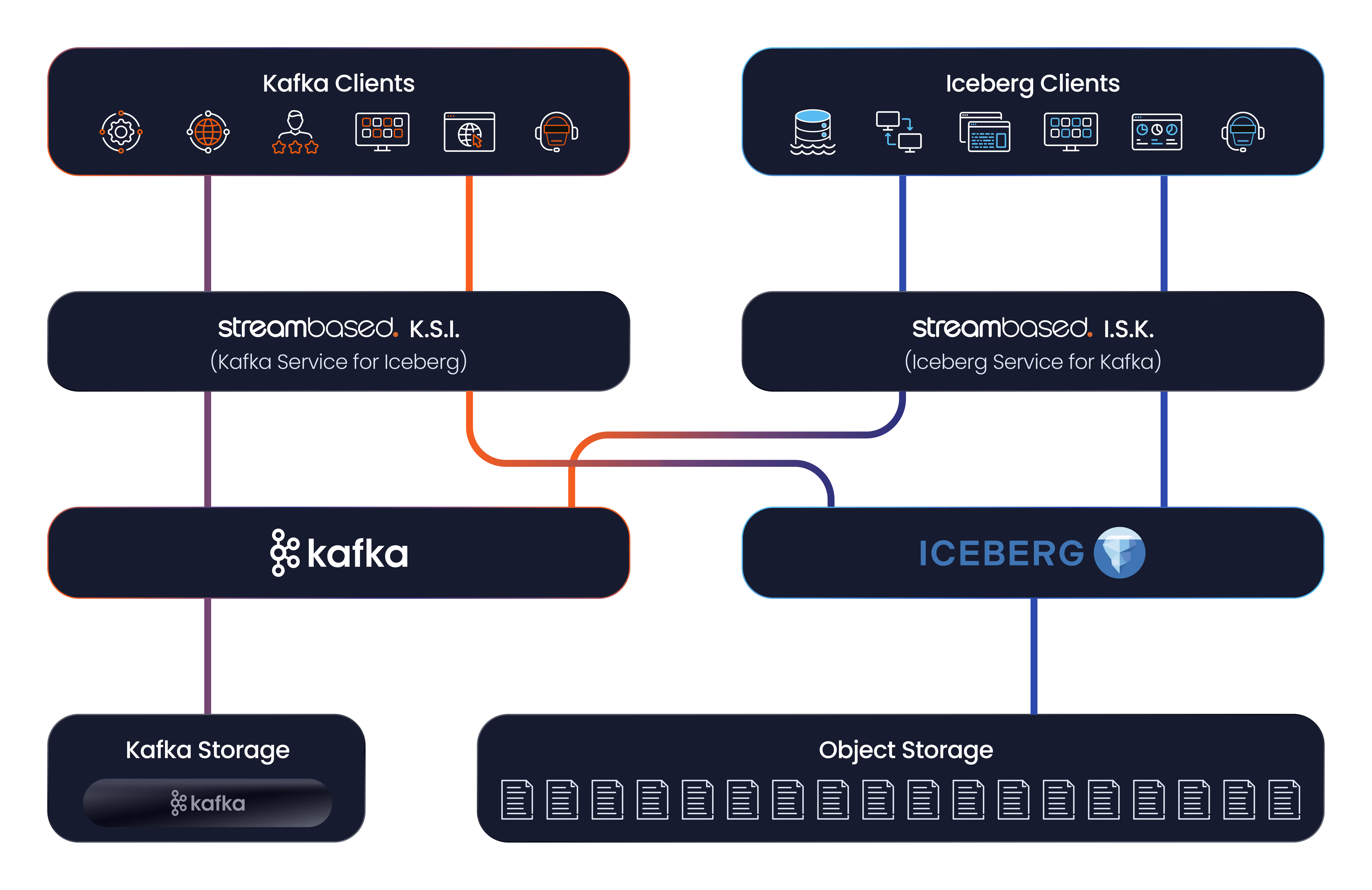

The Streambased platform consists of two services: I.S.K. and K.S.I.

I.S.K. - One Table Across All Time

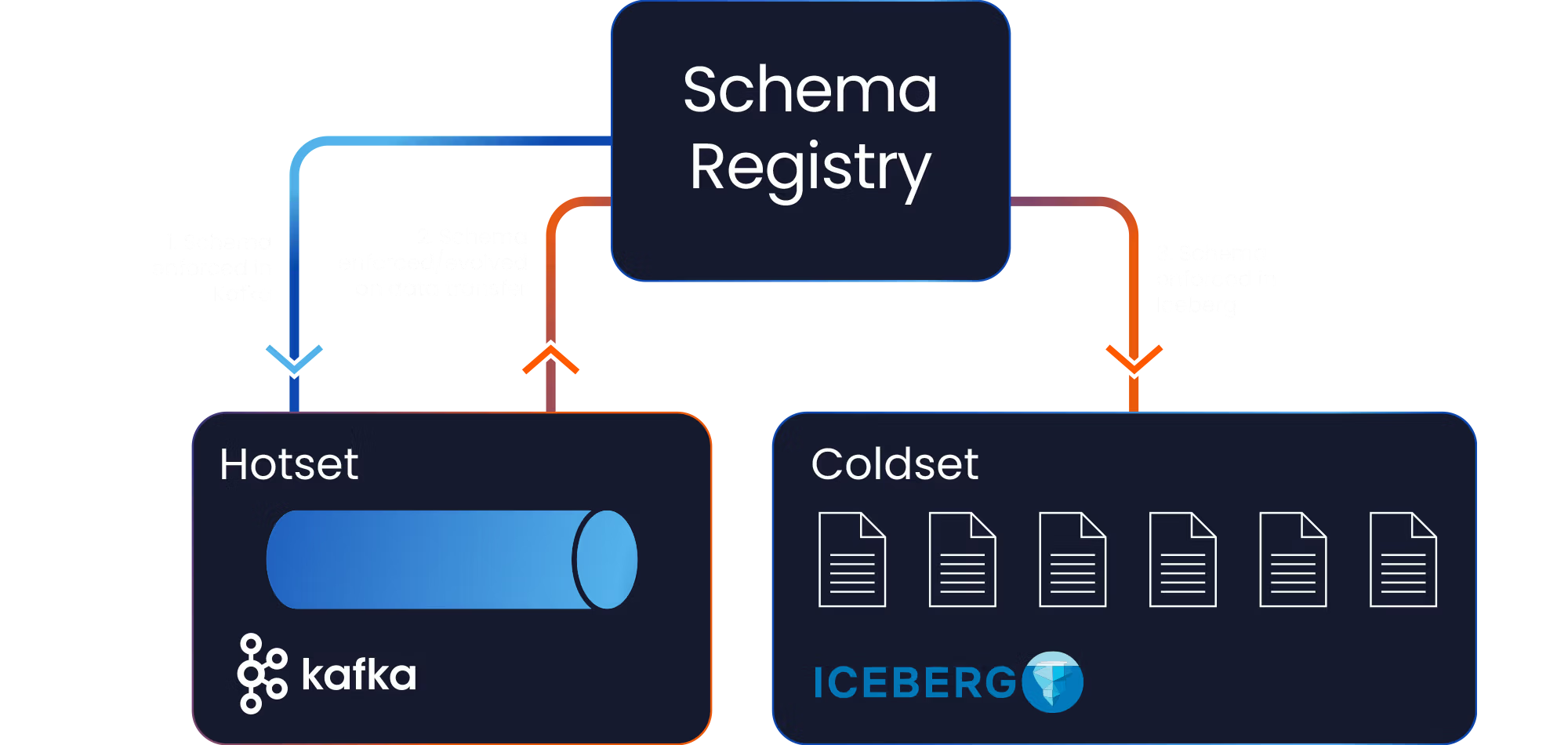

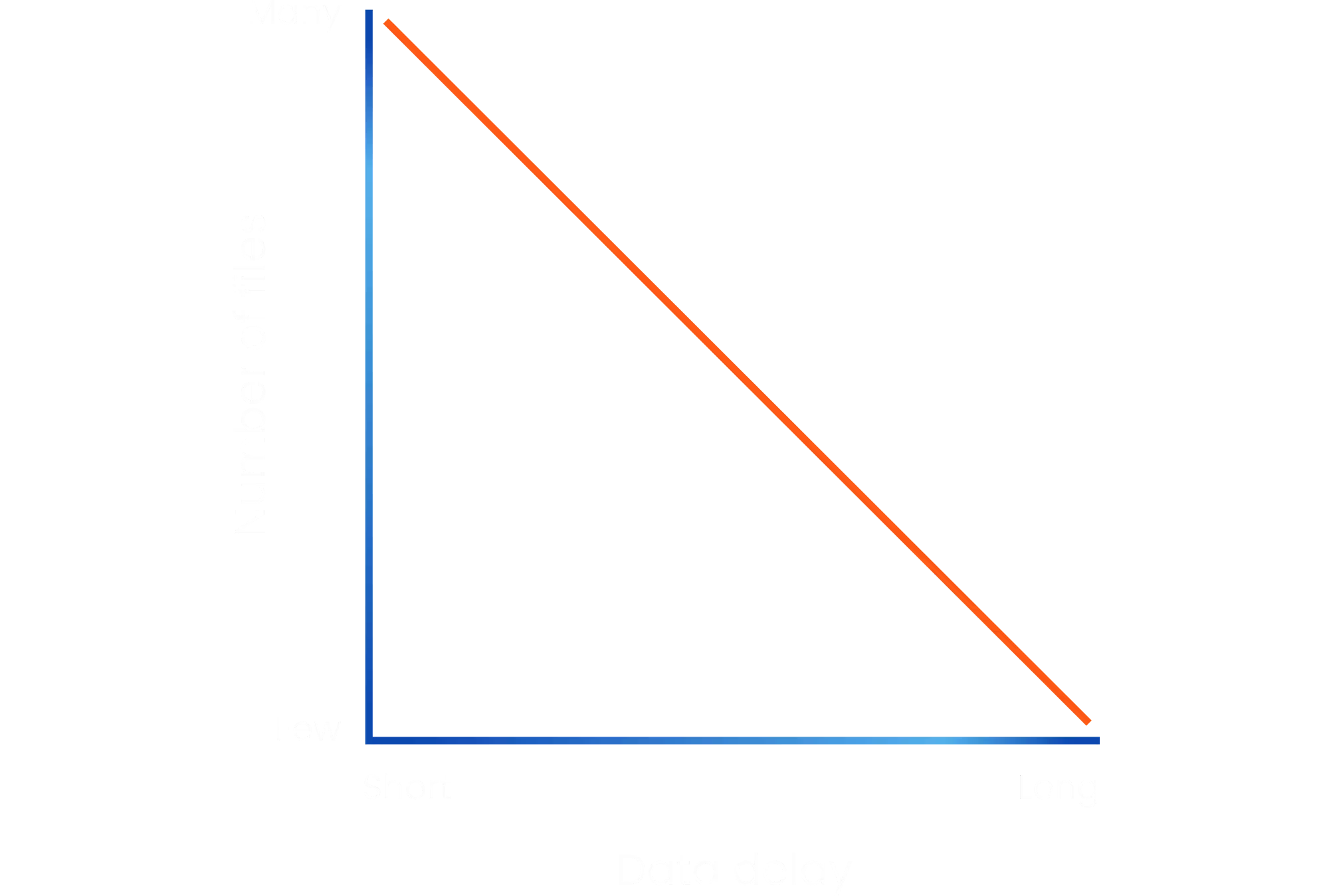

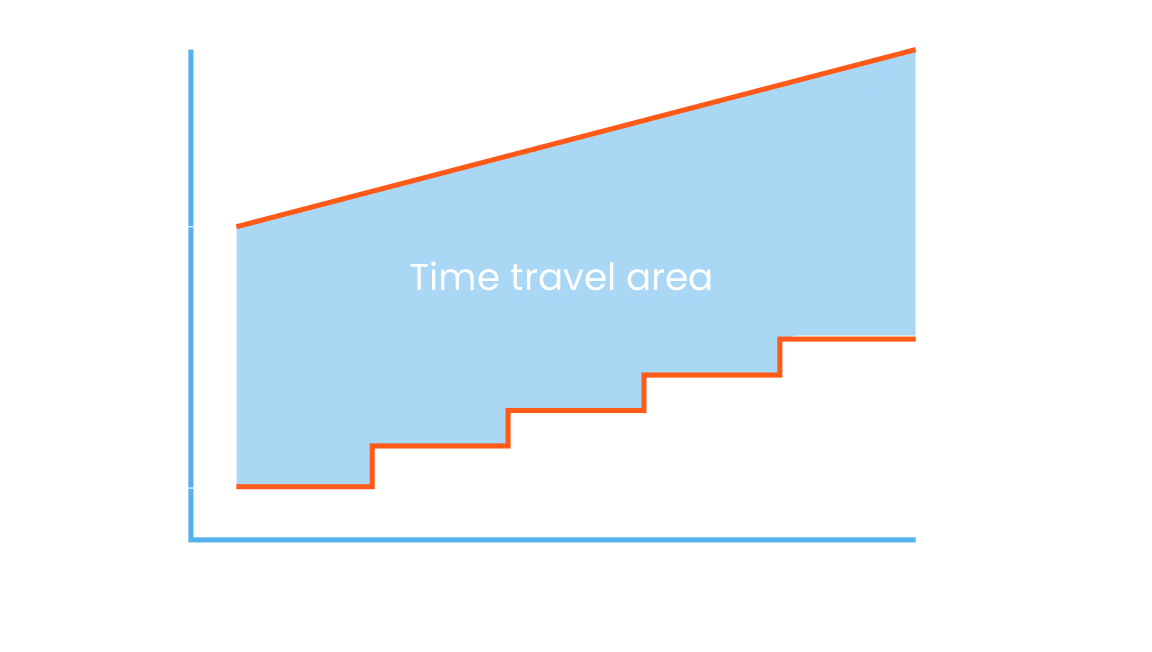

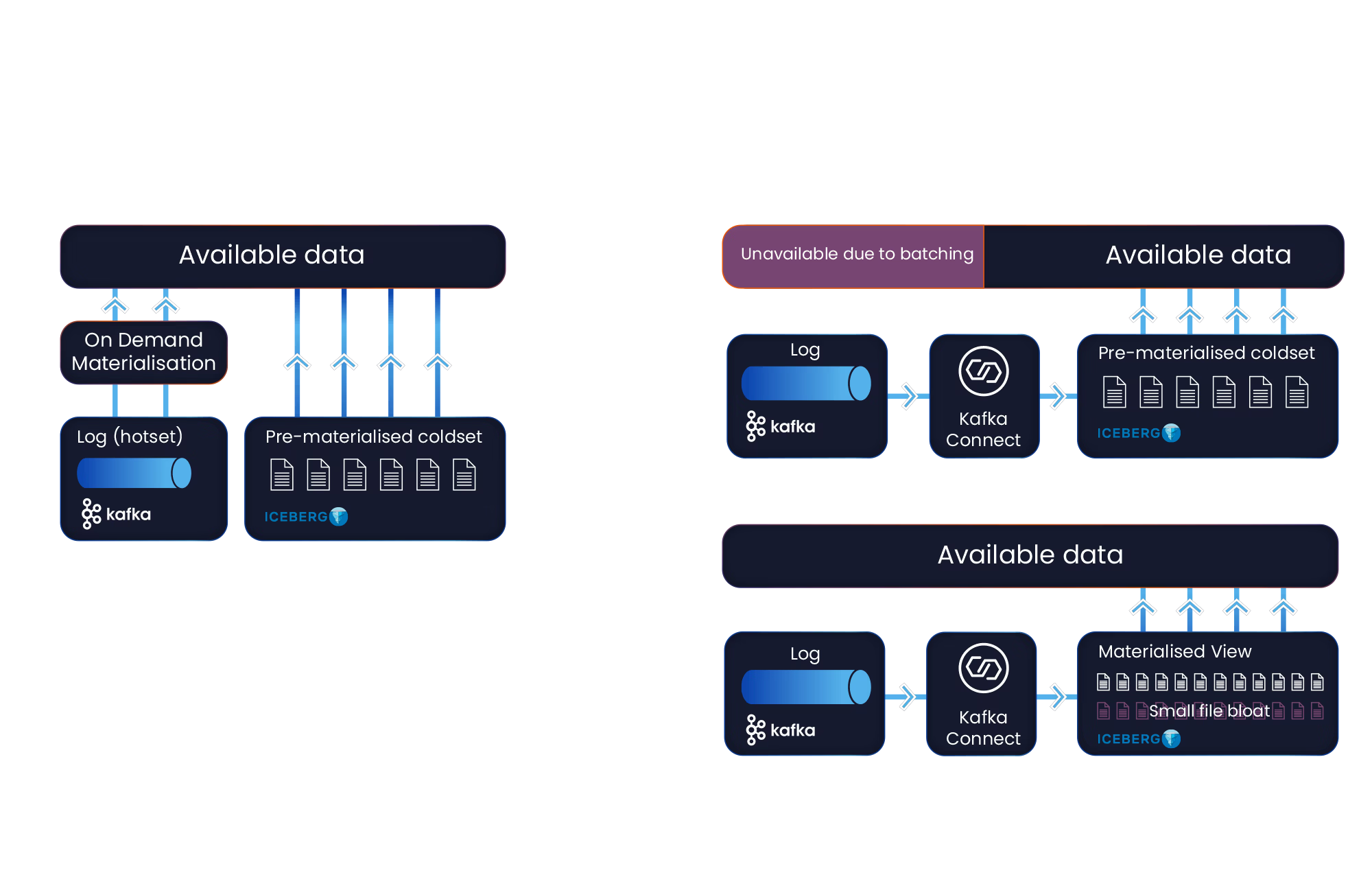

Streambased I.S.K. presents a set of Iceberg tables composed of a section of real-time data from Kafka (the “hotset”) and a section of physical Iceberg data (the “coldset”).

Tables in I.S.K. combine these two sections in a way that is completely transparent to any client interacting with them: each one looks like a regular Iceberg table.
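As a rough mental model only, the hotset/coldset combination can be sketched in a few lines of Python. Everything here is hypothetical and is not Streambased's implementation: the `Row` type, the `unified_scan` function, the single-partition simplification, and the assumption that Kafka offsets are what mark the boundary between cold and hot data.

```python
from dataclasses import dataclass
from typing import Iterator


@dataclass(frozen=True)
class Row:
    offset: int  # assumed: position of the record in its source Kafka partition
    value: str


def unified_scan(coldset: list[Row], hotset: list[Row]) -> Iterator[Row]:
    """Yield one logical table: cold rows first, then only the newer hot rows.

    Assumption for this sketch: a hot row is skipped when its offset is
    already covered by the coldset, so the reader never sees duplicates.
    """
    last_cold = max((r.offset for r in coldset), default=-1)
    yield from sorted(coldset, key=lambda r: r.offset)
    yield from (
        r for r in sorted(hotset, key=lambda r: r.offset) if r.offset > last_cold
    )


# Example: offset 1 exists in both sections but appears once in the result.
cold = [Row(0, "a"), Row(1, "b")]
hot = [Row(1, "b"), Row(2, "c")]
table = list(unified_scan(cold, hot))
```

The point of the sketch is that the caller only ever iterates over `table`; which section a row came from is not visible to it.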

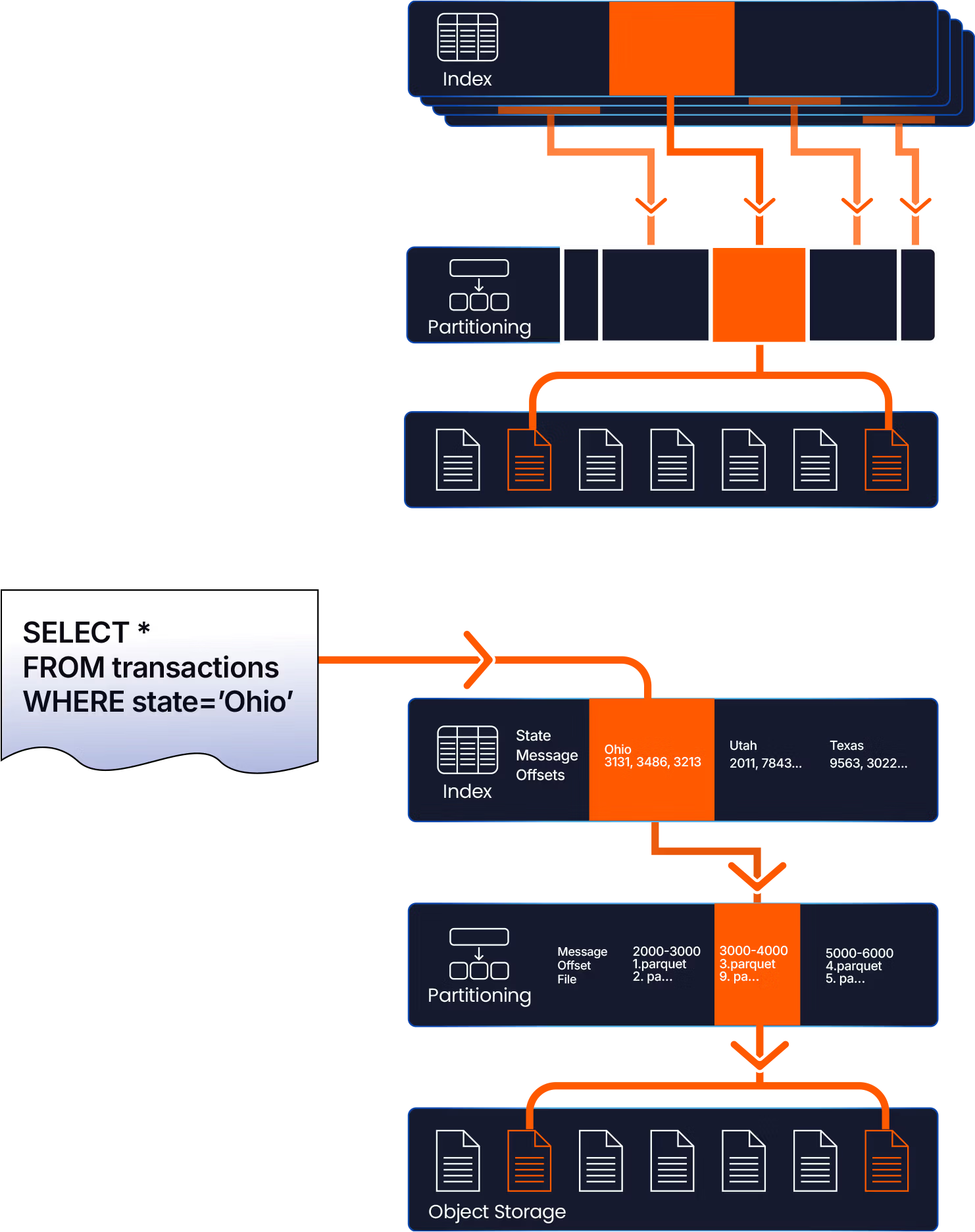

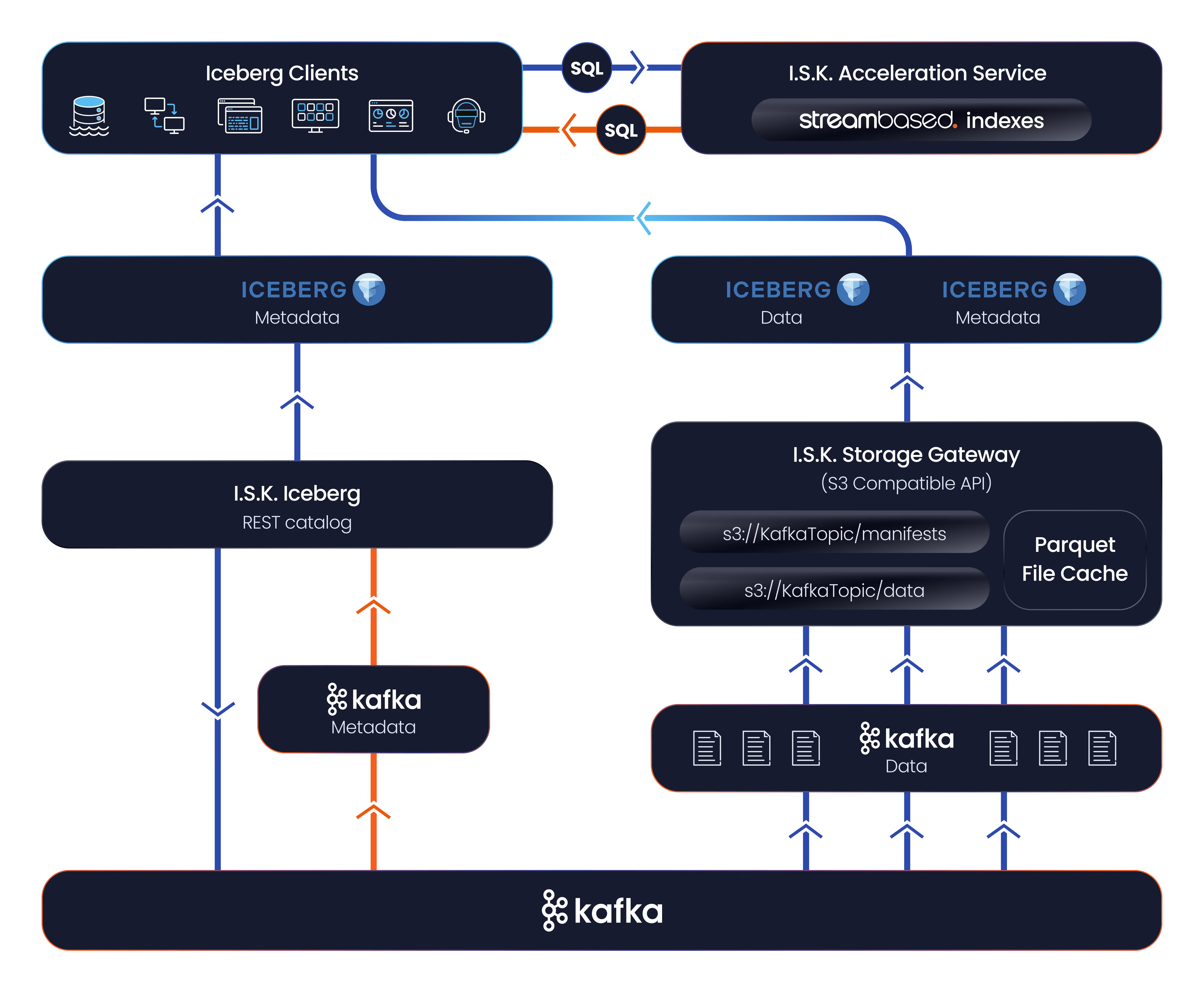

The I.S.K. architecture consists of the following components:

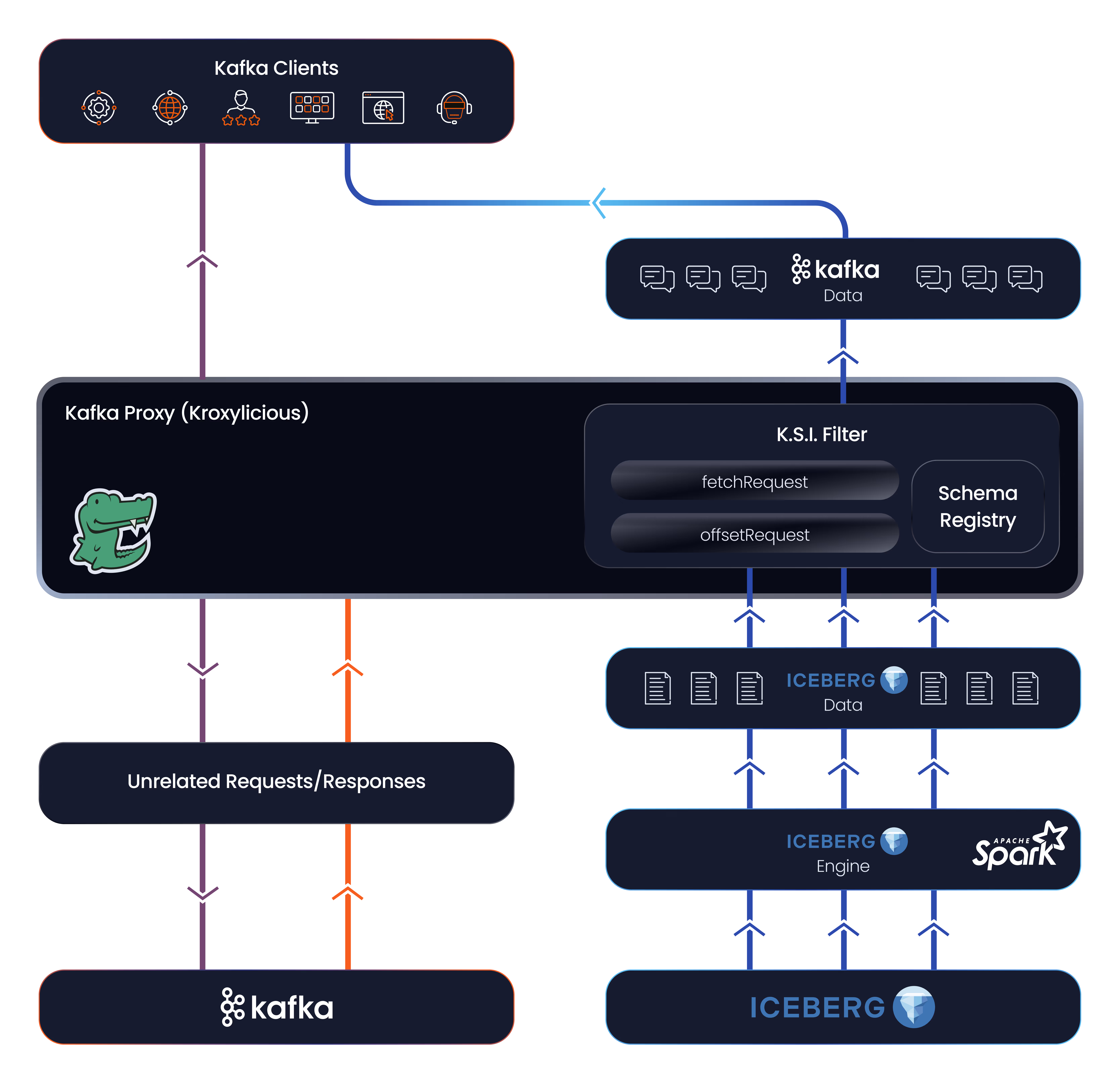

K.S.I. - One Stream Across All Time

Streambased K.S.I. presents Kafka topics composed of a “hotset” section of data served directly from Kafka and a “coldset” section served from Iceberg.

Kafka’s partition and offset concepts are mapped from columns in the Iceberg data, so Kafka clients can read the Iceberg-backed coldset as if it were part of an ordinary Kafka topic.
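The column-to-offset mapping can be illustrated with a minimal Python sketch. The column names `kafka_partition`, `kafka_offset` and `payload`, the `Record` type, and the `fetch` function are all assumptions made for this example; the actual column names and serving logic used by K.S.I. are not described here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    partition: int
    offset: int
    value: str


def fetch(
    cold_rows: list[dict],
    hot_records: list[Record],
    partition: int,
    start_offset: int,
    max_records: int,
) -> list[Record]:
    """Serve a Kafka-style fetch from Iceberg-like rows plus live records."""
    # Map the (hypothetical) Iceberg columns onto Kafka's partition/offset.
    cold = [
        Record(r["kafka_partition"], r["kafka_offset"], r["payload"])
        for r in cold_rows
        if r["kafka_partition"] == partition and r["kafka_offset"] >= start_offset
    ]
    cold_offsets = {r.offset for r in cold}
    # Take hot records only where the coldset does not already cover the offset.
    hot = [
        r
        for r in hot_records
        if r.partition == partition
        and r.offset >= start_offset
        and r.offset not in cold_offsets
    ]
    return sorted(cold + hot, key=lambda r: r.offset)[:max_records]


cold_rows = [
    {"kafka_partition": 0, "kafka_offset": 0, "payload": "a"},
    {"kafka_partition": 0, "kafka_offset": 1, "payload": "b"},
    {"kafka_partition": 1, "kafka_offset": 0, "payload": "x"},
]
hot_records = [Record(0, 1, "b"), Record(0, 2, "c")]
batch = fetch(cold_rows, hot_records, partition=0, start_offset=1, max_records=10)
```

In this sketch a consumer asking for partition 0 from offset 1 receives a contiguous run of offsets, with no indication that the first record came from table rows and the second from the live stream.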

The K.S.I. architecture consists of:

Let’s find the right solution for your data

We’re here to help you unlock the full potential of your streaming data. Tell us about your challenges or ideas — and let’s explore how Streambased can support your business.